Prometheus Operator: Quick Deployment


用户1107783

Published 2023-09-11 11:04:51


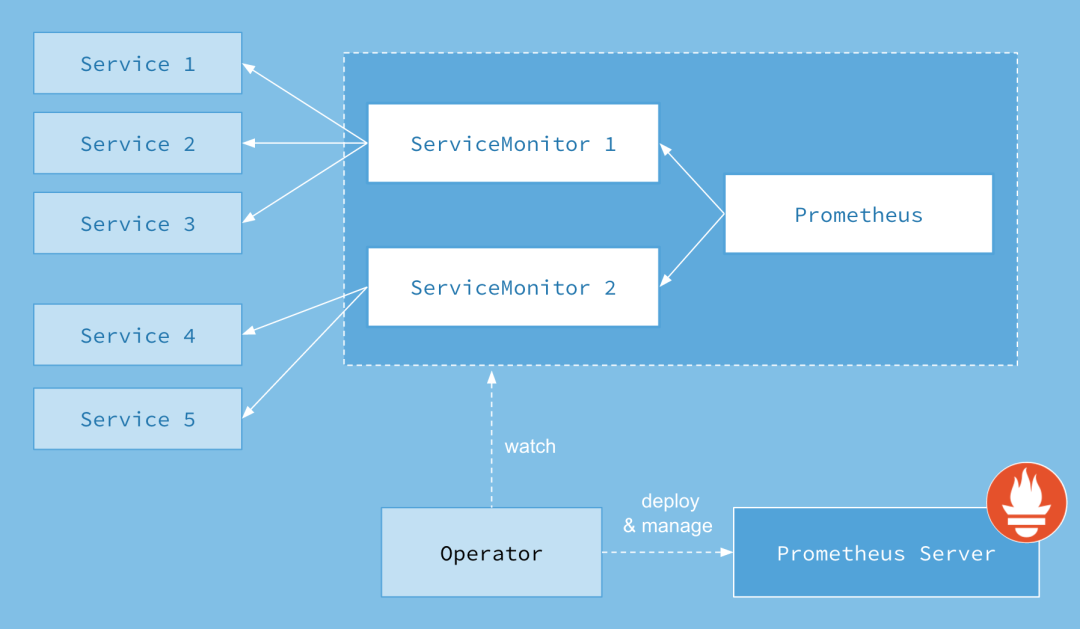

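Prometheus Operator is a Kubernetes Operator that simplifies deploying, managing, and operating Prometheus and its related components on Kubernetes.

Prometheus Operator provides a declarative way to define and manage Prometheus instances, ServiceMonitors, Alertmanagers, and other Prometheus-related resources. It extends the Kubernetes API with Custom Resource Definitions (CRDs) and manages the lifecycle of these resources through controllers.

The main features and concepts of Prometheus Operator are: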

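- Prometheus instance management: Prometheus Operator lets you define and deploy Prometheus instances by creating Prometheus custom resources (the Prometheus CRD). The resource specifies the monitoring targets, configuration rules, and alerting rules that the instance should load.

- ServiceMonitor management: by creating ServiceMonitor custom resources, you define the services and endpoints that Prometheus should scrape. Prometheus Operator then configures the Prometheus instances automatically to collect those metrics.

- Alertmanager management: Prometheus Operator also creates and manages Alertmanager instances. The Alertmanager CRD describes the Alertmanager deployment, notification routing and receivers are set in the Alertmanager configuration, and the alerting rules themselves live in PrometheusRule resources.

- Automatic discovery and configuration: Prometheus Operator relies on Kubernetes label selectors and service discovery to find and configure monitoring targets. When Pods or Services with matching labels are added or removed, the set of scraped targets is updated without manual edits to the Prometheus configuration.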

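- Scaling and high availability: the number of Prometheus replicas is declared in the Prometheus resource, and the Operator runs that many instances in the cluster to provide high availability. Changing the replica count is a small edit to the resource; the Operator does not scale the instances automatically with load.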

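Using Prometheus Operator simplifies Prometheus operations and gives you a Kubernetes-native way to manage monitoring for your applications. It makes deploying and managing Prometheus in a Kubernetes cluster more convenient, flexible, and reliable.

Among these resources, the Prometheus resource describes a Prometheus deployment, while ServiceMonitor and PodMonitor resources describe the services that Prometheus monitors.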
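To make the declarative model concrete, here is a minimal sketch of a Prometheus resource, written to a local file so it can be inspected before applying. The resource name `example`, the label `team: example`, and the service account are assumptions for illustration; they are not taken from the kube-prometheus manifests used later in this article.

```shell
# Write a hypothetical Prometheus custom resource to a local file.
cat > example-prometheus.yaml <<'EOF'
apiVersion: monitoring.coreos.com/v1
kind: Prometheus
metadata:
  name: example                      # hypothetical name
  namespace: monitoring
spec:
  replicas: 2                        # number of instances, set declaratively
  serviceAccountName: prometheus-k8s # assumption: an account with scrape permissions
  serviceMonitorSelector:            # select ServiceMonitors carrying this label
    matchLabels:
      team: example                  # hypothetical label
EOF

# Once the Operator and its CRDs are installed, the file could be applied with:
# kubectl apply -f example-prometheus.yaml
```

The Operator would react to such a resource by creating a StatefulSet with two replicas and generating the scrape configuration from every ServiceMonitor labeled `team: example`.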

Version Compatibility

| kube-prometheus version | Kubernetes version |
|---|---|
| release-0.4 | 1.16, 1.17 |
| release-0.5 | 1.18 |
| release-0.6 | 1.18, 1.19 |
| release-0.7 | 1.19, 1.20 |
| release-0.8 | 1.20, 1.21 |
| release-0.9 | 1.21, 1.22 |
| release-0.10 | 1.22, 1.23 |
| release-0.11 | 1.23, 1.24 |
| main | 1.24 |

Reference: https://github.com/prometheus-operator/kube-prometheus#compatibility
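The compatibility table can be turned into a small lookup. The sketch below is one possible reading of it: where two branches list the same Kubernetes version, it assumes the newer release branch is preferred, and it maps 1.24 to `release-0.11` rather than the moving `main` branch. The function name `kube_prometheus_branch` is hypothetical.

```shell
# Map a Kubernetes version (e.g. v1.20.5 or 1.20) to a kube-prometheus branch,
# based on the compatibility table in this article.
kube_prometheus_branch() {
  ver="${1#v}"                              # drop a leading "v": v1.20.5 -> 1.20.5
  minor="$(echo "$ver" | cut -d. -f1,2)"    # keep major.minor:   1.20.5 -> 1.20
  case "$minor" in
    1.16|1.17) echo "release-0.4" ;;
    1.18)      echo "release-0.6" ;;
    1.19)      echo "release-0.7" ;;
    1.20)      echo "release-0.8" ;;
    1.21)      echo "release-0.9" ;;
    1.22)      echo "release-0.10" ;;
    1.23|1.24) echo "release-0.11" ;;
    *)         echo "unknown"; return 1 ;;
  esac
}

kube_prometheus_branch v1.20.5   # prints release-0.8
```

For the v1.20.5 cluster used in this article, the lookup yields `release-0.8`, which matches the branch cloned in the next section.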

Quick Start

Environment Overview

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master-50.57 Ready control-plane,master 77d v1.20.5

k8s-node-50.58 Ready <none> 77d v1.20.5

k8s-node-50.59 Ready <none> 77d v1.20.5

Download kube-prometheus

git clone -b release-0.8 https://github.com/prometheus-operator/kube-prometheus.git

### Alternatively, clone from the Gitee mirror

git clone -b release-0.8 https://gitee.com/root_007/kube-prometheus.git

# ll

total 184

-rw-r--r-- 1 root root 679 Aug 3 13:58 build.sh

-rw-r--r-- 1 root root 3039 Aug 3 13:58 code-of-conduct.md

-rw-r--r-- 1 root root 1422 Aug 3 13:58 DCO

drwxr-xr-x 2 root root 4096 Aug 3 13:58 docs

-rw-r--r-- 1 root root 2051 Aug 3 13:58 example.jsonnet

drwxr-xr-x 7 root root 4096 Aug 3 13:58 examples

drwxr-xr-x 3 root root 28 Aug 3 13:58 experimental

-rw-r--r-- 1 root root 237 Aug 3 13:58 go.mod

-rw-r--r-- 1 root root 59996 Aug 3 13:58 go.sum

drwxr-xr-x 3 root root 68 Aug 3 13:58 hack

drwxr-xr-x 3 root root 29 Aug 3 13:58 jsonnet

-rw-r--r-- 1 root root 206 Aug 3 13:58 jsonnetfile.json

-rw-r--r-- 1 root root 4857 Aug 3 13:58 jsonnetfile.lock.json

drwxr-xr-x 2 root root 6 Aug 3 13:58 kube-prometheus

-rw-r--r-- 1 root root 4437 Aug 3 13:58 kustomization.yaml

-rw-r--r-- 1 root root 11325 Aug 3 13:58 LICENSE

-rw-r--r-- 1 root root 2101 Aug 3 13:58 Makefile

drwxr-xr-x 3 root root 4096 Aug 3 13:58 manifests

-rw-r--r-- 1 root root 126 Aug 3 13:58 NOTICE

-rw-r--r-- 1 root root 38246 Aug 3 13:58 README.md

drwxr-xr-x 2 root root 187 Aug 3 13:58 scripts

-rw-r--r-- 1 root root 928 Aug 3 13:58 sync-to-internal-registry.jsonnet

drwxr-xr-x 3 root root 17 Aug 3 13:58 tests

-rw-r--r-- 1 root root 808 Aug 3 13:58 test.sh

Deployment

Deployment manifest list

# tree manifests/

manifests/

├── alertmanager-alertmanager.yaml

├── alertmanager-podDisruptionBudget.yaml

├── alertmanager-prometheusRule.yaml

├── alertmanager-secret.yaml

├── alertmanager-serviceAccount.yaml

├── alertmanager-serviceMonitor.yaml

├── alertmanager-service.yaml

├── blackbox-exporter-clusterRoleBinding.yaml

├── blackbox-exporter-clusterRole.yaml

├── blackbox-exporter-configuration.yaml

├── blackbox-exporter-deployment.yaml

├── blackbox-exporter-serviceAccount.yaml

├── blackbox-exporter-serviceMonitor.yaml

├── blackbox-exporter-service.yaml

├── grafana-dashboardDatasources.yaml

├── grafana-dashboardDefinitions.yaml

├── grafana-dashboardSources.yaml

├── grafana-deployment.yaml

├── grafana-serviceAccount.yaml

├── grafana-serviceMonitor.yaml

├── grafana-service.yaml

├── kube-prometheus-prometheusRule.yaml

├── kubernetes-prometheusRule.yaml

├── kubernetes-serviceMonitorApiserver.yaml

├── kubernetes-serviceMonitorCoreDNS.yaml

├── kubernetes-serviceMonitorKubeControllerManager.yaml

├── kubernetes-serviceMonitorKubelet.yaml

├── kubernetes-serviceMonitorKubeScheduler.yaml

├── kube-state-metrics-clusterRoleBinding.yaml

├── kube-state-metrics-clusterRole.yaml

├── kube-state-metrics-deployment.yaml

├── kube-state-metrics-prometheusRule.yaml

├── kube-state-metrics-serviceAccount.yaml

├── kube-state-metrics-serviceMonitor.yaml

├── kube-state-metrics-service.yaml

├── node-exporter-clusterRoleBinding.yaml

├── node-exporter-clusterRole.yaml

├── node-exporter-daemonset.yaml

├── node-exporter-prometheusRule.yaml

├── node-exporter-serviceAccount.yaml

├── node-exporter-serviceMonitor.yaml

├── node-exporter-service.yaml

├── prometheus-adapter-apiService.yaml

├── prometheus-adapter-clusterRoleAggregatedMetricsReader.yaml

├── prometheus-adapter-clusterRoleBindingDelegator.yaml

├── prometheus-adapter-clusterRoleBinding.yaml

├── prometheus-adapter-clusterRoleServerResources.yaml

├── prometheus-adapter-clusterRole.yaml

├── prometheus-adapter-configMap.yaml

├── prometheus-adapter-deployment.yaml

├── prometheus-adapter-roleBindingAuthReader.yaml

├── prometheus-adapter-serviceAccount.yaml

├── prometheus-adapter-serviceMonitor.yaml

├── prometheus-adapter-service.yaml

├── prometheus-clusterRoleBinding.yaml

├── prometheus-clusterRole.yaml

├── prometheus-operator-prometheusRule.yaml

├── prometheus-operator-serviceMonitor.yaml

├── prometheus-podDisruptionBudget.yaml

├── prometheus-prometheusRule.yaml

├── prometheus-prometheus.yaml

├── prometheus-roleBindingConfig.yaml

├── prometheus-roleBindingSpecificNamespaces.yaml

├── prometheus-roleConfig.yaml

├── prometheus-roleSpecificNamespaces.yaml

├── prometheus-serviceAccount.yaml

├── prometheus-serviceMonitor.yaml

├── prometheus-service.yaml

└── setup

    ├── 0namespace-namespace.yaml

    ├── prometheus-operator-0alertmanagerConfigCustomResourceDefinition.yaml

    ├── prometheus-operator-0alertmanagerCustomResourceDefinition.yaml

    ├── prometheus-operator-0podmonitorCustomResourceDefinition.yaml

    ├── prometheus-operator-0probeCustomResourceDefinition.yaml

    ├── prometheus-operator-0prometheusCustomResourceDefinition.yaml

    ├── prometheus-operator-0prometheusruleCustomResourceDefinition.yaml

    ├── prometheus-operator-0servicemonitorCustomResourceDefinition.yaml

    ├── prometheus-operator-0thanosrulerCustomResourceDefinition.yaml

    ├── prometheus-operator-clusterRoleBinding.yaml

    ├── prometheus-operator-clusterRole.yaml

    ├── prometheus-operator-deployment.yaml

    ├── prometheus-operator-serviceAccount.yaml

    └── prometheus-operator-service.yaml

1 directory, 82 files

Apply the manifests

kubectl apply -f manifests/setup/

kubectl apply -f manifests/

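The namespace and CRDs in manifests/setup/ are created first so that the remaining components do not hit a race condition while they are being deployed.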
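To make that ordering explicit, the two `kubectl apply` steps can be wrapped with a wait in between. This is a minimal sketch, assuming it runs from the root of the cloned repository; the function name `deploy_kube_prometheus` is made up for illustration, and the loop simply retries `kubectl get servicemonitors` until the API server serves the new CRD.

```shell
# Apply the setup manifests, wait for the ServiceMonitor CRD, then apply the rest.
deploy_kube_prometheus() {
  # Step 1: namespace, CRDs, and the Operator itself.
  kubectl apply -f manifests/setup/ || return 1

  # Step 2: poll until the ServiceMonitor CRD is registered with the API server.
  until kubectl get servicemonitors --all-namespaces >/dev/null 2>&1; do
    sleep 1
  done

  # Step 3: everything that depends on the CRDs.
  kubectl apply -f manifests/
}

# Usage (from the kube-prometheus checkout):
# deploy_kube_prometheus
```

Without the wait, the second `apply` may be rejected for resources such as ServiceMonitor if their CRDs are not registered yet; rerunning it usually resolves that.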

Check Pod status

# kubectl get pod -n monitoring

NAME READY STATUS RESTARTS AGE

alertmanager-main-0 2/2 Running 0 21m

alertmanager-main-1 2/2 Running 0 21m

alertmanager-main-2 2/2 Running 0 21m

blackbox-exporter-55c457d5fb-4ldl5 3/3 Running 0 21m

grafana-9df57cdc4-qqqdl 1/1 Running 0 21m

kube-state-metrics-657b8c7649-sprs5 3/3 Running 0 3m23s

node-exporter-n4qp9 2/2 Running 0 21m

node-exporter-nm9hm 2/2 Running 0 21m

node-exporter-rzxbb 2/2 Running 0 21m

prometheus-adapter-59df95d9f5-hj75h 1/1 Running 0 21m

prometheus-adapter-59df95d9f5-qcpbq 1/1 Running 0 21m

prometheus-k8s-0 2/2 Running 1 21m

prometheus-k8s-1 2/2 Running 1 21m

prometheus-operator-7775c66ccf-qfsxh 2/2 Running 0 21m

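# If the Pods reach the state shown above, the installation succeeded

Note: if image pulls fail, refer to https://github.com/DaoCloud/public-image-mirror to map the images to a mirror, or download them another way (for example through a proxy, if you have one).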
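The Pod status check can also be scripted. The sketch below lists every Pod in the namespace whose STATUS column is not `Running`; the function name `pods_not_running` is hypothetical, and the column position assumes the default `kubectl get pods` output shown above.

```shell
# Print the names of Pods that are not in the Running state.
# $1: namespace (defaults to monitoring)
pods_not_running() {
  kubectl get pods -n "${1:-monitoring}" --no-headers |
    awk '$3 != "Running" { print $1 }'   # column 3 is STATUS
}

# Usage:
# pods_not_running monitoring
#
# Alternatively, block until all Pods report Ready:
# kubectl -n monitoring wait --for=condition=Ready pods --all --timeout=300s
```

An empty result means every Pod is running; Pods stuck in `ImagePullBackOff` or `Pending` are printed by name, which is a common symptom of the image download problem mentioned above.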

List the created CRDs

# kubectl get crd | grep monitoring.coreos.com

alertmanagerconfigs.monitoring.coreos.com 2023-08-03T06:12:40Z

alertmanagers.monitoring.coreos.com 2023-08-03T06:12:40Z

podmonitors.monitoring.coreos.com 2023-08-03T06:12:40Z

probes.monitoring.coreos.com 2023-08-03T06:12:40Z

prometheuses.monitoring.coreos.com 2023-08-03T06:12:40Z

prometheusrules.monitoring.coreos.com 2023-08-03T06:12:41Z

servicemonitors.monitoring.coreos.com 2023-08-03T06:12:41Z

thanosrulers.monitoring.coreos.com 2023-08-03T06:12:41Z

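CRD descriptions:

alertmanagerconfigs:

- This CRD defines a part of the Alertmanager configuration, mainly the alert routing.

alertmanagers:

- This CRD defines the configuration of an Alertmanager running in the Kubernetes cluster and offers a range of options, including persistent storage.

- For each Alertmanager resource, the Operator deploys a correspondingly configured StatefulSet in the same namespace. The Alertmanager Pods are configured to include a Secret named `alertmanager-<name>`, which stores the configuration file in use under the key `alertmanager.yaml`.

podmonitors:

- This CRD defines how a dynamic set of Pods is monitored; labels determine which Pods are selected for monitoring.

probes:

- This CRD defines how a set of Ingresses and static targets is monitored. Besides the targets, a Probe object also needs a prober, which is the service that probes the targets and exposes the resulting metrics to Prometheus; blackbox-exporter can provide this service, for example.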
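As an illustration of the Probe description, a minimal sketch might look like the following. The probe name, the target URL, and the `http_2xx` module are hypothetical, and the prober address assumes the blackbox-exporter Service in the `monitoring` namespace exposes its probe port on 19115; check `kubectl -n monitoring get svc blackbox-exporter` before relying on it.

```shell
# Write a hypothetical Probe resource that checks one static HTTP target.
cat > example-probe.yaml <<'EOF'
apiVersion: monitoring.coreos.com/v1
kind: Probe
metadata:
  name: example-probe              # hypothetical name
  namespace: monitoring
spec:
  jobName: example-http
  prober:
    # Assumption: address of the blackbox-exporter probe port.
    url: blackbox-exporter.monitoring.svc:19115
  module: http_2xx                 # assumption: module defined in the exporter config
  targets:
    staticConfig:
      static:
      - https://example.com        # hypothetical target
EOF

# kubectl apply -f example-probe.yaml
```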

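prometheuses:

- This CRD declares the desired configuration of a Prometheus running in the Kubernetes cluster, with options for replicas, persistent storage, alerting, and more.

- For each Prometheus resource, the Operator deploys a correspondingly configured StatefulSet in the same namespace. The Prometheus Pods mount a Secret named `prometheus-<name>` that contains the Prometheus configuration.

- The resource uses label selectors to specify which ServiceMonitors the deployed Prometheus instance should cover. The Operator generates the configuration from the selected ServiceMonitors and updates it in the Secret that holds the configuration.

prometheusrules:

- This CRD configures Prometheus rule files, including recording rules and alerting rules, which Prometheus loads automatically.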
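A PrometheusRule with one recording rule and one alerting rule could be sketched as follows. The rule names and expressions are made up for illustration, and the `prometheus: k8s` / `role: alert-rules` labels are an assumption: they only matter if the `ruleSelector` of your Prometheus resource filters on them.

```shell
# Write a hypothetical PrometheusRule with a recording rule and an alerting rule.
# The heredoc delimiter is quoted so that {{ $labels.instance }} is kept literally.
cat > example-rules.yaml <<'EOF'
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: example-rules              # hypothetical name
  namespace: monitoring
  labels:
    prometheus: k8s                # assumption: must match the Prometheus ruleSelector
    role: alert-rules
spec:
  groups:
  - name: example.rules
    rules:
    - record: job:up:sum           # recording rule: precomputed series
      expr: sum by (job) (up)
    - alert: TargetDown            # alerting rule: fires after 5 minutes
      expr: up == 0
      for: 5m
      labels:
        severity: warning
      annotations:
        summary: "Target {{ $labels.instance }} is down"
EOF

# kubectl apply -f example-rules.yaml
```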

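servicemonitors:

- This CRD defines how a dynamic set of services is monitored; labels determine which Services are selected for monitoring.

- For Prometheus to monitor any application inside Kubernetes, an Endpoints object must exist. An Endpoints object is essentially a list of IP addresses and is usually populated automatically by a Service: the Service matches Pods through its label selector and adds them to the Endpoints object. A Service can expose one or more ports, each backed by a list of endpoints that normally point to Pods.

- Note: `endpoints` is a field in the ServiceMonitor CRD, whereas `Endpoints` is a Kubernetes object kind.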
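The relationship between a Service, its Endpoints object, and the `endpoints` field can be seen in a small sketch. Everything named `example-app` here is hypothetical, as is the `web` port name; the ServiceMonitor selects Services by label, and each entry under `endpoints` names a Service port to scrape.

```shell
# Write a hypothetical ServiceMonitor that scrapes Services labeled app=example-app.
cat > example-servicemonitor.yaml <<'EOF'
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: example-app                # hypothetical name
  namespace: monitoring
spec:
  selector:
    matchLabels:
      app: example-app             # label on the Service, not on the Pods
  namespaceSelector:
    matchNames:
    - default                      # namespace where the Service lives
  endpoints:                       # lowercase: a ServiceMonitor field
  - port: web                      # name of a port defined on the Service
    path: /metrics
    interval: 30s
EOF

# kubectl apply -f example-servicemonitor.yaml
# The IP addresses actually scraped come from the Endpoints object of the Service:
# kubectl -n default get endpoints example-app
```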

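thanosrulers:

- This CRD defines the configuration of a Thanos Ruler component so that it can run in the Kubernetes cluster. With Thanos Ruler, recording and alerting rules can be evaluated across multiple Prometheus instances.

- A ThanosRuler instance needs at least one `queryEndpoints` entry that points to a Thanos Querier or a Prometheus instance. `queryEndpoints` is used to configure the `--query` flag of the Thanos runtime.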

Verification

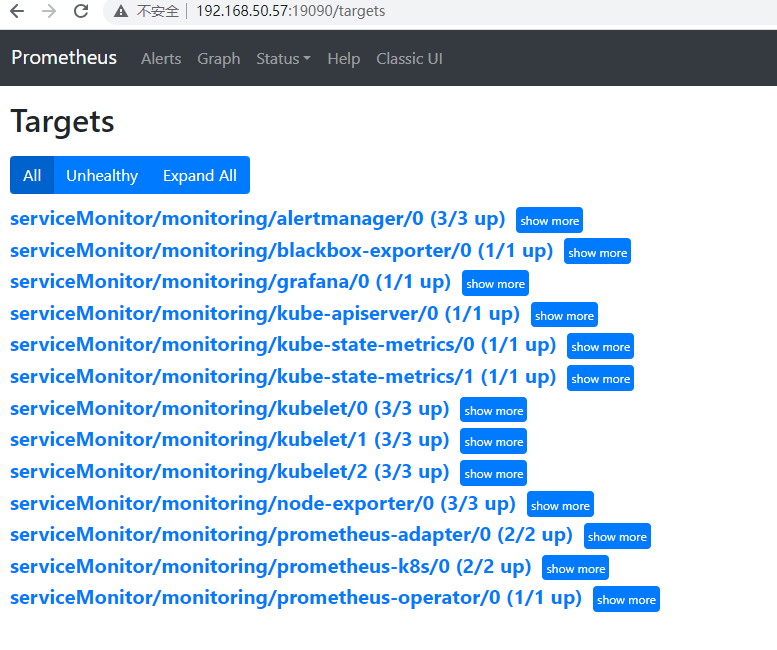

Access Prometheus

# This is only a demo, so we map the port with kubectl port-forward; in production, expose the service through NodePort or Ingress instead

[root@k8s-master-50.57 ~/prometheus/aa/kube-prometheus/manifests] eth0 = 192.168.50.57

# kubectl --namespace monitoring port-forward svc/prometheus-k8s --address 0.0.0.0 19090:9090

Forwarding from 0.0.0.0:19090 -> 9090

Next, open Prometheus in a browser, as shown below:

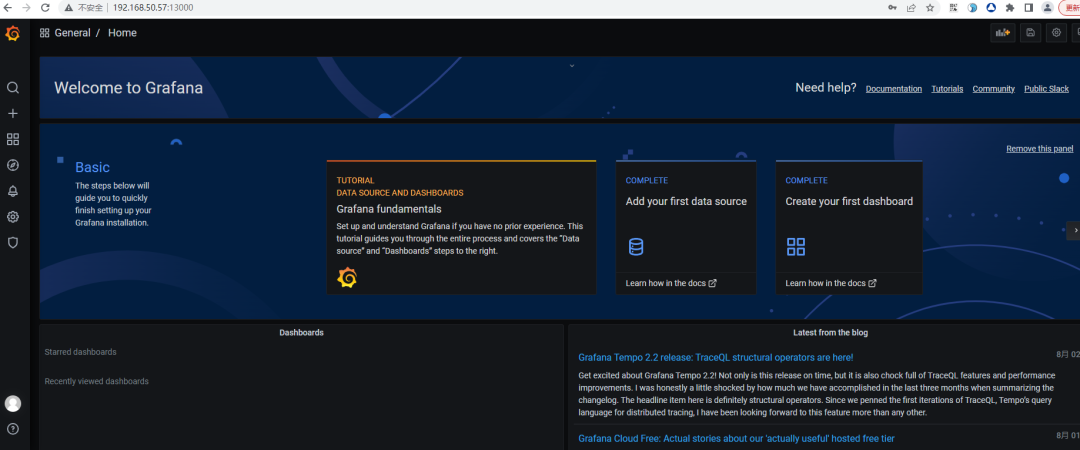

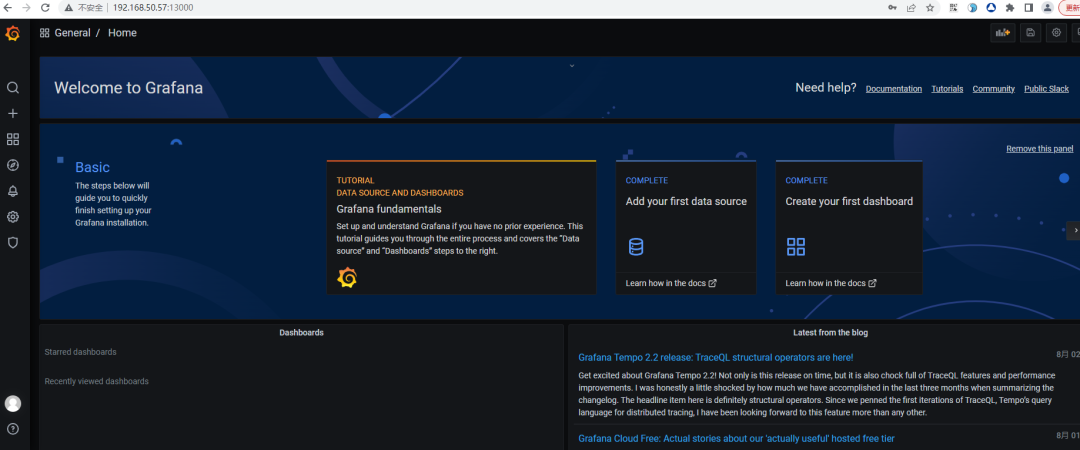

Access Grafana

[root@k8s-master-50.57 ~/prometheus/aa/kube-prometheus/manifests] eth0 = 192.168.50.57

# kubectl --namespace monitoring port-forward svc/grafana --address 0.0.0.0 13000:3000

Forwarding from 0.0.0.0:13000 -> 3000

Next, open Grafana in a browser, as shown below:

Note: the default username and password are admin/admin.

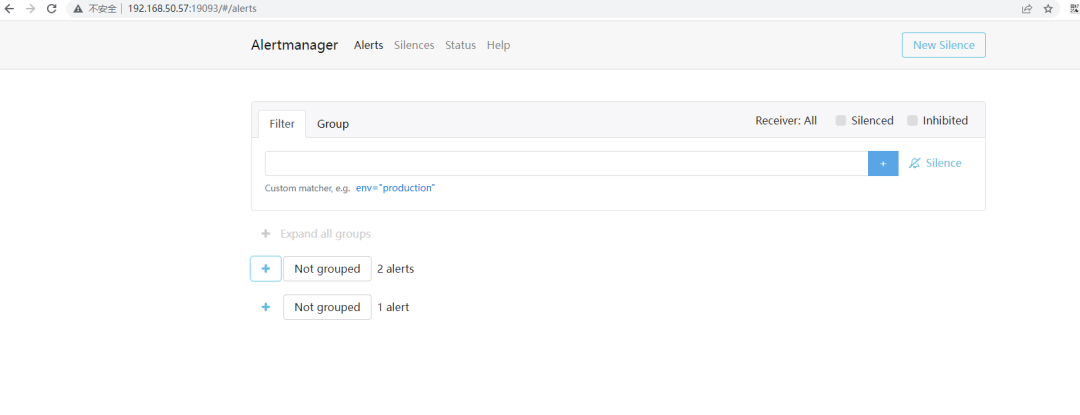

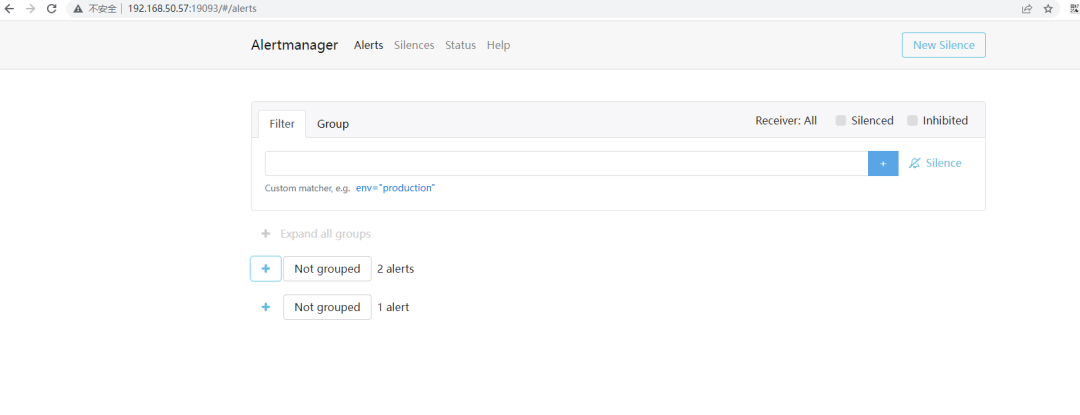

Access Alertmanager

[root@k8s-master-50.57 ~/prometheus/aa/kube-prometheus/manifests] eth0 = 192.168.50.57

# kubectl --namespace monitoring port-forward svc/alertmanager-main --address 0.0.0.0 19093:9093

Forwarding from 0.0.0.0:19093 -> 9093

Next, open Alertmanager in a browser.
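Before opening a browser, the three port-forwards can be checked from the command line. This sketch assumes the local ports used above (19090, 13000, 19093) and that the forwards are still running; the function name `check_monitoring_endpoints` is hypothetical. It uses the health endpoints `/-/healthy` (Prometheus and Alertmanager) and `/api/health` (Grafana).

```shell
# Probe the health endpoints behind the three port-forwards.
# $1: host running the port-forwards (defaults to 127.0.0.1)
check_monitoring_endpoints() {
  host="${1:-127.0.0.1}"
  rc=0
  for url in \
    "http://$host:19090/-/healthy" \
    "http://$host:13000/api/health" \
    "http://$host:19093/-/healthy"
  do
    # -f makes curl fail on HTTP errors; the response body is discarded.
    if curl -fsS -o /dev/null "$url"; then
      echo "OK   $url"
    else
      echo "FAIL $url"
      rc=1
    fi
  done
  return "$rc"
}

# Usage:
# check_monitoring_endpoints 192.168.50.57
```

The function returns a non-zero status if any endpoint fails, so it can also serve as a simple smoke test in a script.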

Change the access method

Change the grafana Service type to NodePort

apiVersion: v1
kind: Service
metadata:
  labels:
    app.kubernetes.io/component: grafana
    app.kubernetes.io/name: grafana
    app.kubernetes.io/part-of: kube-prometheus
    app.kubernetes.io/version: 7.5.4
  name: grafana
  namespace: monitoring
spec:
  type: NodePort
  ports:
  - name: http
    port: 3000
    targetPort: http
  selector:
    app.kubernetes.io/component: grafana
    app.kubernetes.io/name: grafana
    app.kubernetes.io/part-of: kube-prometheus

[root@k8s-master-50.57 ~/prometheus/aa/kube-prometheus/manifests] eth0 = 192.168.50.57

# kubectl get svc -n monitoring | grep grafana

grafana NodePort 200.96.25.92 <none> 3000:30966/TCP 44m

Change the prometheus Service type to NodePort

apiVersion: v1
kind: Service
metadata:
  labels:
    app.kubernetes.io/component: prometheus
    app.kubernetes.io/name: prometheus
    app.kubernetes.io/part-of: kube-prometheus
    app.kubernetes.io/version: 2.26.0
    prometheus: k8s
  name: prometheus-k8s
  namespace: monitoring
spec:
  type: NodePort
  ports:
  - name: web
    port: 9090
    targetPort: web
  selector:
    app: prometheus
    app.kubernetes.io/component: prometheus
    app.kubernetes.io/name: prometheus
    app.kubernetes.io/part-of: kube-prometheus
    prometheus: k8s
  sessionAffinity: ClientIP

[root@k8s-master-50.57 ~/prometheus/aa/kube-prometheus/manifests] eth0 = 192.168.50.57

# kubectl get svc -n monitoring | grep prometheus-k8s

prometheus-k8s NodePort 200.108.12.8 <none> 9090:32024/TCP 46m
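As an alternative to editing the manifest files, the Service type can be switched in place with `kubectl patch`. This is only a sketch: the function name `expose_as_nodeport` is hypothetical, and a patch made this way is overwritten the next time the unmodified manifests are applied.

```shell
# Switch a Service in the monitoring namespace to NodePort and print the assigned port.
# $1: Service name, e.g. grafana or prometheus-k8s
expose_as_nodeport() {
  kubectl -n monitoring patch svc "$1" -p '{"spec":{"type":"NodePort"}}' &&
    kubectl -n monitoring get svc "$1" -o jsonpath='{.spec.ports[0].nodePort}'
}

# Usage:
# expose_as_nodeport grafana
# expose_as_nodeport prometheus-k8s
```

The printed port corresponds to the second number in the PORT(S) column above (30966 for grafana and 32024 for prometheus-k8s in this environment), and the service is then reachable on that port of any node IP.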

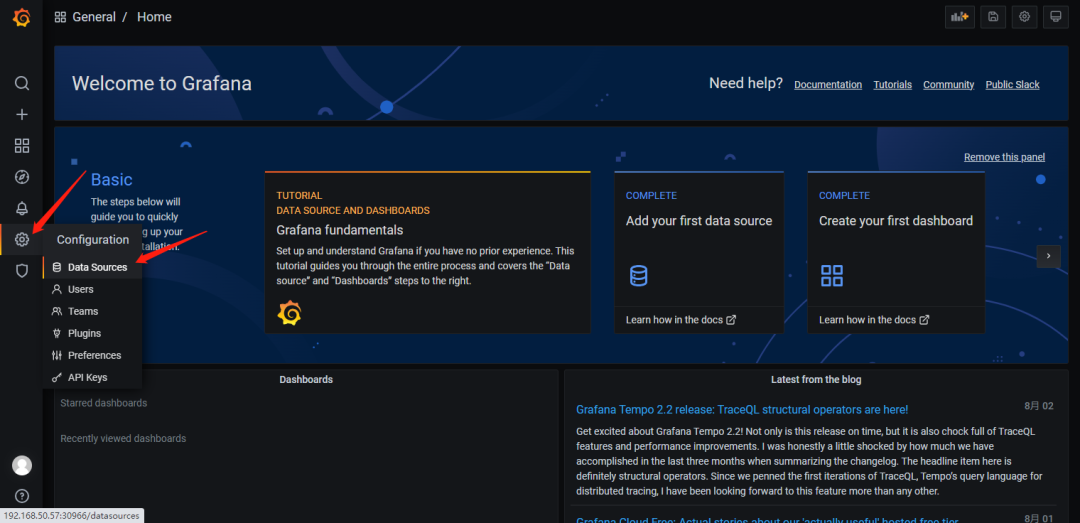

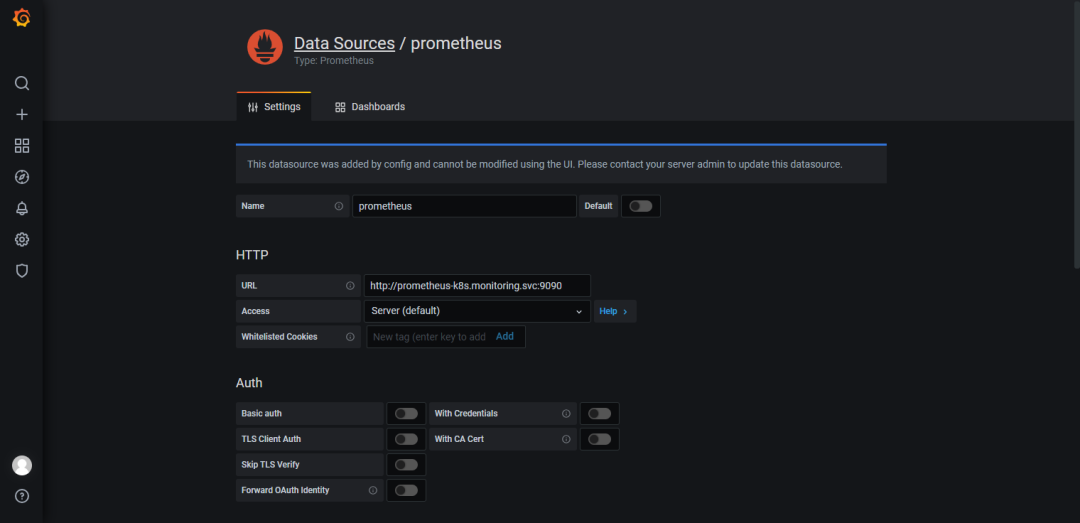

Connect Grafana to Prometheus

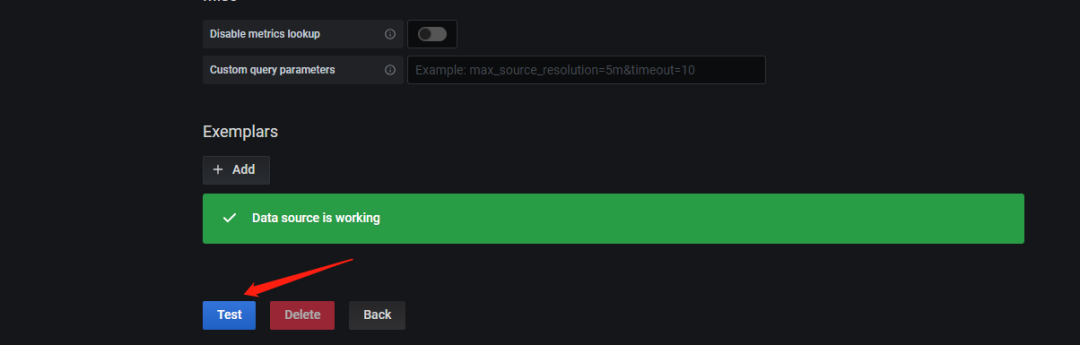

Enter the Prometheus connection details.

Click Test; if "Data source is working" appears, the connection succeeded.

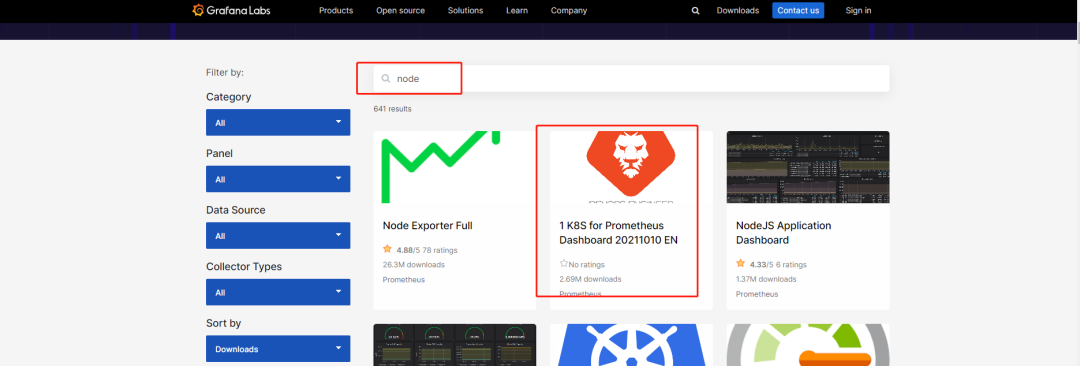

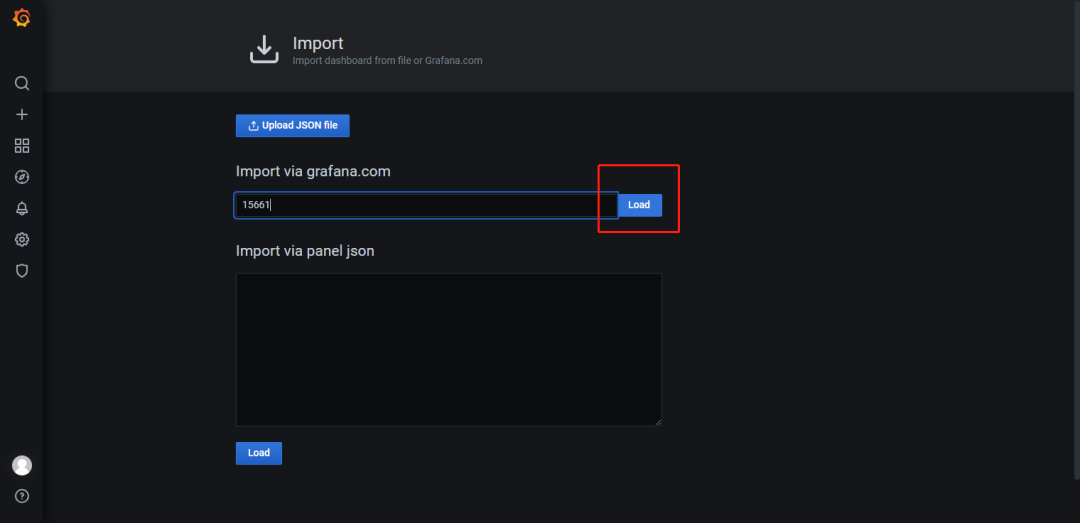

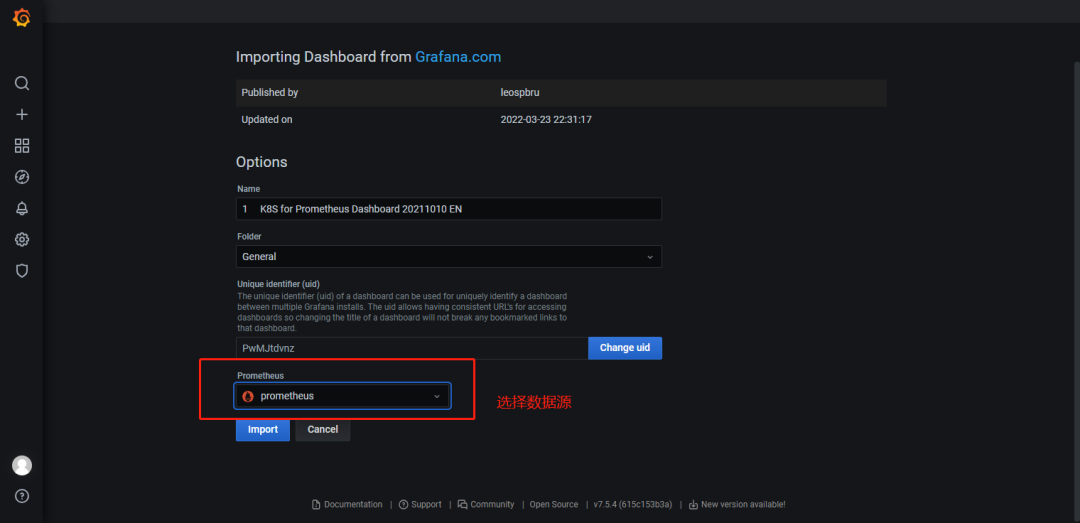
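The same data source can be created without the UI through Grafana's HTTP API. The sketch below assumes the Grafana port-forward from earlier (local port 13000), the default admin/admin credentials, and the in-cluster address derived from the Service name and namespace shown above. The data source name `prometheus-k8s` and the function name are hypothetical; note that kube-prometheus ships `grafana-dashboardDatasources.yaml`, so a Prometheus data source may already be provisioned.

```shell
# Create a Prometheus data source through the Grafana HTTP API.
# $1: Grafana base URL (defaults to the port-forward used earlier)
add_prometheus_datasource() {
  grafana_url="${1:-http://127.0.0.1:13000}"
  curl -fsS -u admin:admin \
    -H 'Content-Type: application/json' \
    -X POST "$grafana_url/api/datasources" \
    -d '{"name":"prometheus-k8s","type":"prometheus","access":"proxy","url":"http://prometheus-k8s.monitoring.svc:9090"}'
}

# Usage:
# add_prometheus_datasource http://192.168.50.57:13000
```

With `access` set to `proxy`, Grafana queries Prometheus from inside the cluster, which is why the in-cluster Service address is used rather than a NodePort.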

Load a Dashboard in Grafana

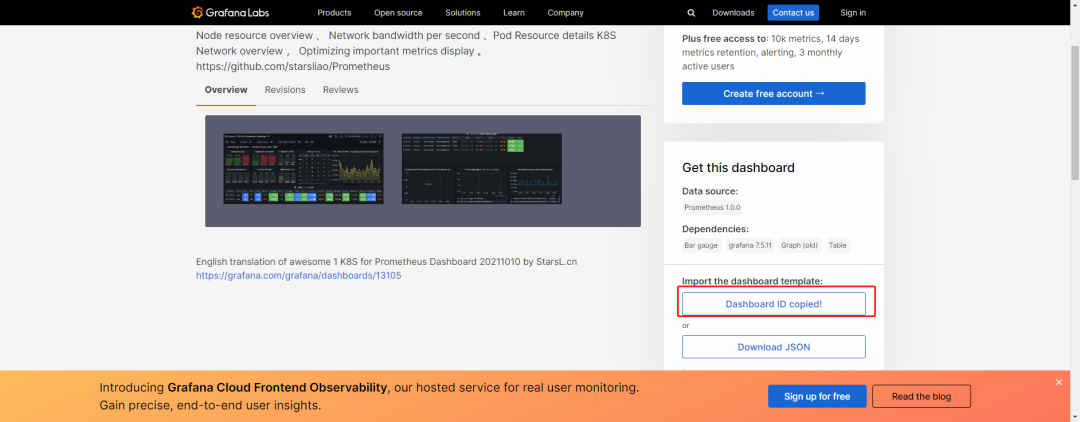

Copy the dashboard ID; alternatively, download the JSON file for that dashboard.

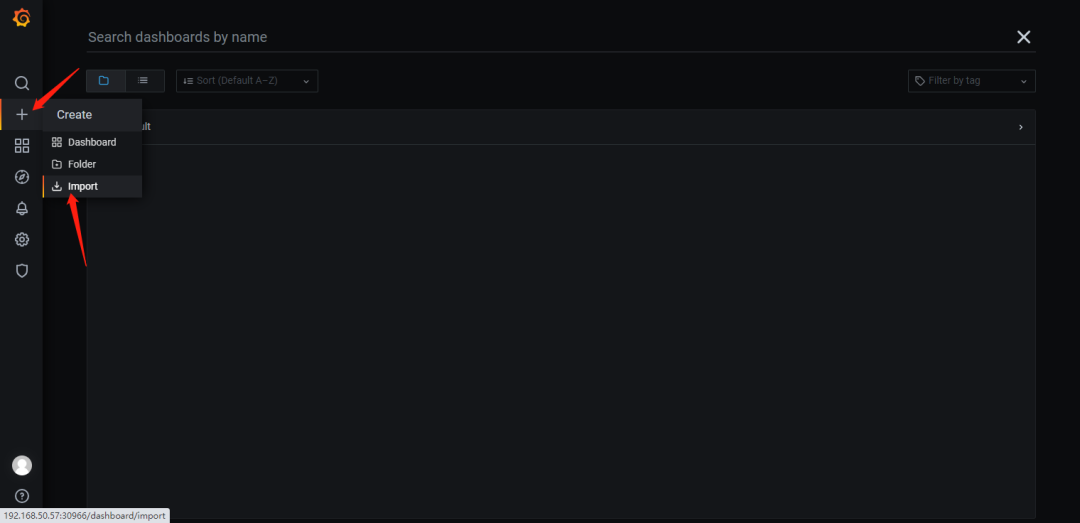

Then import the template in the Grafana console.

Click Load.

This example uses the dashboard ID, but loading the JSON file works as well.

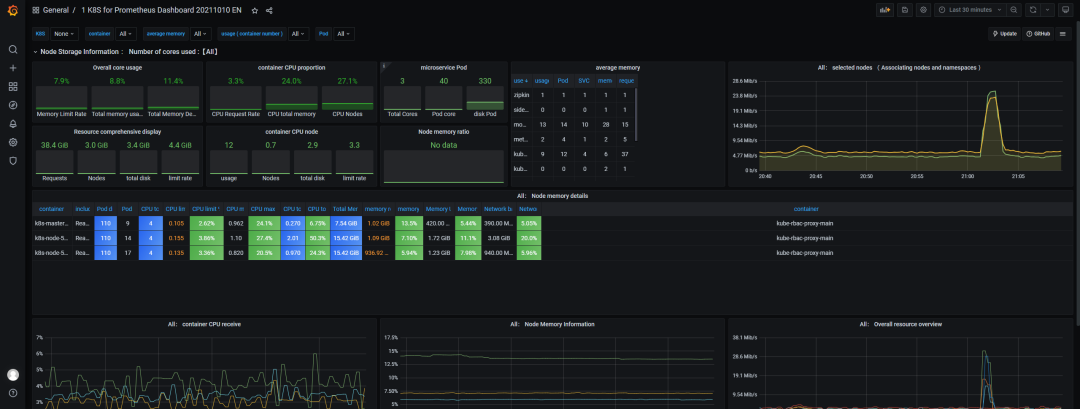

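Once the import succeeds, the dashboard can be opened to view the result.

Summary

This article covered how to deploy Prometheus quickly with Prometheus Operator and how to use Grafana dashboard templates. The next article will cover custom monitoring targets in Prometheus, so stay tuned!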

This article is part of the Tencent Cloud self-media syndication program and is shared from a WeChat official account.

Originally published: 2023-08-09.
