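Step 1: Enable node outbound access

For ES to reach third-party services on the public internet, you must first enable "Node outbound access".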

Step 2: Create the Inference endpoint

The following is a reference template for a custom Inference endpoint:

    PUT _inference/text_embedding/test-text-embedding
    {
      "service": "custom",
      "service_settings": {
        "secret_parameters": {
          "api_key": "<some api key>"
        },
        "url": "...endpoints.huggingface.cloud/v1/embeddings",
        "headers": {
          "Authorization": "Bearer ${api_key}",
          "Content-Type": "application/json"
        },
        "request": "{\"input\": ${input}}",
        "response": {
          "json_parser": {
            "text_embeddings": "$.data[*].embedding[*]"
          }
        }
      }
    }

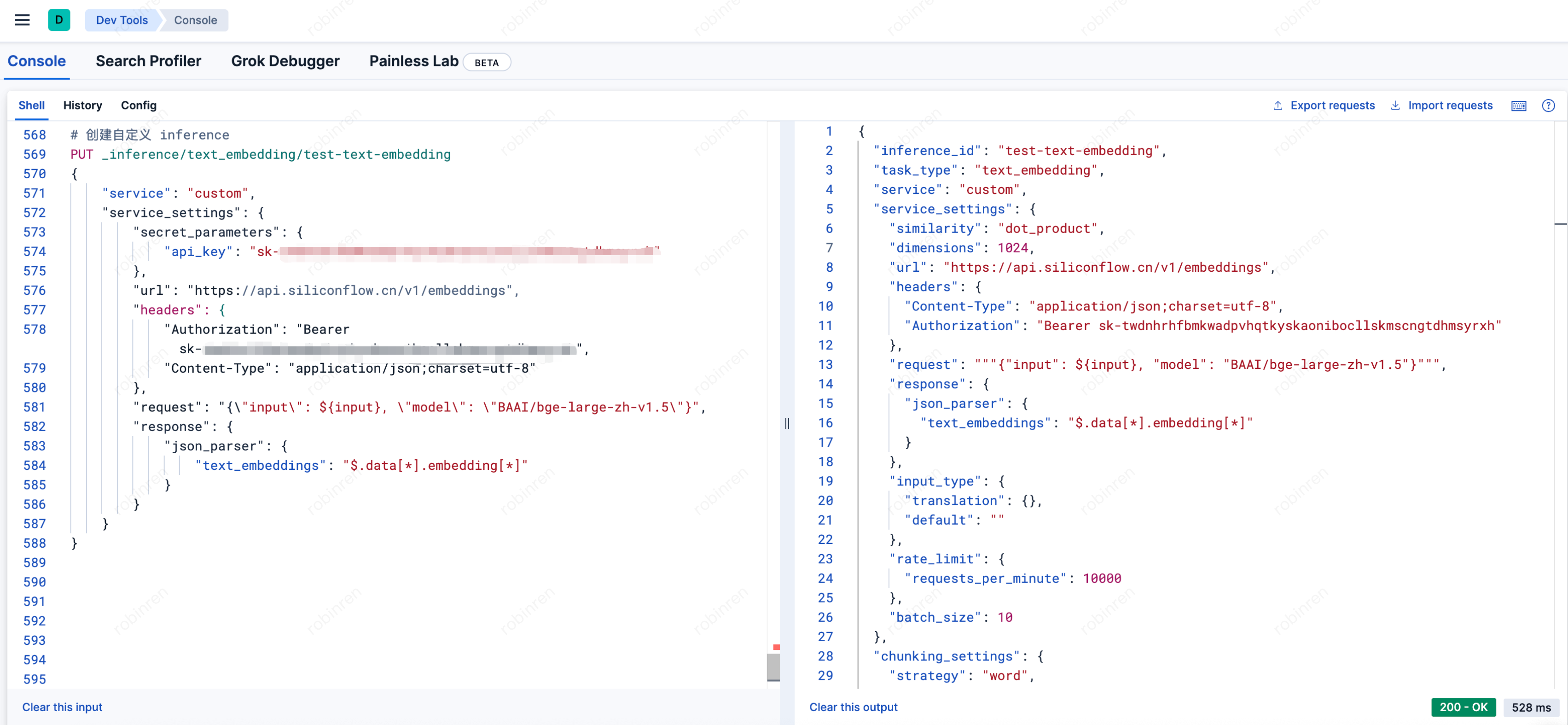
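To make the template's two moving parts easier to picture, here is a minimal Python sketch of what the `request` placeholder and the `json_parser` path correspond to. It is only an illustration and not how Elasticsearch implements them: `render_request` and `extract_embeddings` are hypothetical helpers, and the sketch assumes that `${input}` is filled with a JSON array of the input strings and that the upstream service answers in the OpenAI-style `data[].embedding` layout implied by the path `$.data[*].embedding[*]`.

```python
import json


def render_request(template: str, texts: list) -> str:
    """Hypothetical helper: fill ${input} with the JSON-encoded list of texts."""
    return template.replace("${input}", json.dumps(texts))


def extract_embeddings(upstream_response: dict) -> list:
    """Hypothetical helper: collect the vectors that "$.data[*].embedding[*]" points at."""
    return [item["embedding"] for item in upstream_response["data"]]


# The "request" value from the template, after JSON unescaping.
template = '{"input": ${input}}'
body = json.loads(render_request(template, ["hello", "world"]))
print(body)  # {'input': ['hello', 'world']}

# A made-up upstream response in the assumed OpenAI-style layout.
sample_response = {"data": [{"embedding": [0.1, 0.2]}, {"embedding": [0.3, 0.4]}]}
print(extract_embeddings(sample_response))  # [[0.1, 0.2], [0.3, 0.4]]
```

If your provider returns vectors under a different key, the `text_embeddings` path in the template is the part that would need to change.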

For example, the following request uses SiliconFlow's embedding service. Following the template, fill in your API key and the model name:

    PUT _inference/text_embedding/test-text-embedding
    {
      "service": "custom",
      "service_settings": {
        "secret_parameters": {
          "api_key": "sk-<your real API key>"
        },
        "url": "https://api.siliconflow.cn/v1/embeddings",
        "headers": {
          "Authorization": "Bearer ${api_key}",
          "Content-Type": "application/json;charset=utf-8"
        },
        "request": "{\"input\": ${input}, \"model\": \"BAAI/bge-large-zh-v1.5\"}",
        "response": {
          "json_parser": {
            "text_embeddings": "$.data[*].embedding[*]"
          }
        }
      }
    }

If the request returns a response like the following, the endpoint was created successfully:

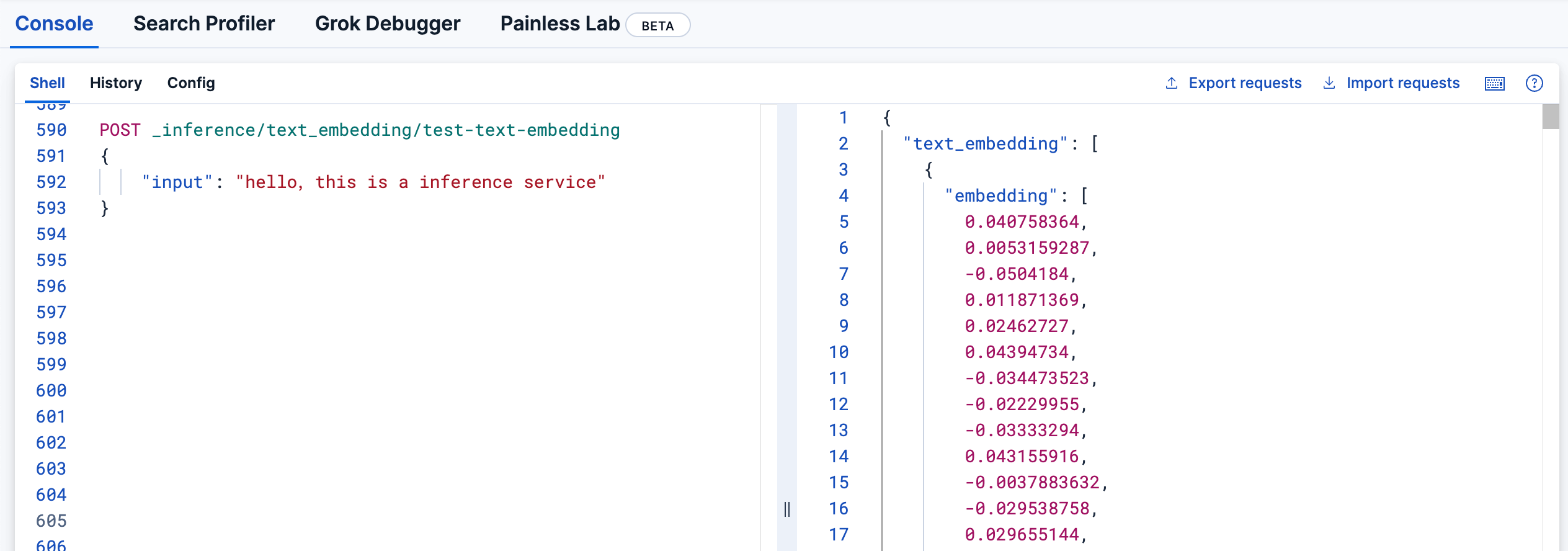
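Hand-escaping the nested JSON in the `request` field is easy to get wrong, so one option is to generate the endpoint definition with a small script and paste the output into the console. The sketch below is only a suggestion: `build_endpoint_body` is a hypothetical helper, and the environment variable name `SILICONFLOW_API_KEY` is an assumption chosen for illustration. It keeps the key in `secret_parameters` and references it from the header through `${api_key}`, as in the template.

```python
import json
import os


def build_endpoint_body(url: str, model: str, api_key: str) -> dict:
    """Hypothetical helper: build the body for PUT _inference/text_embedding/<id>."""
    # Serialize the upstream payload, then swap the quoted marker for the
    # unquoted ${input} placeholder that the custom service substitutes.
    request_template = json.dumps({"input": "__INPUT__", "model": model}).replace(
        '"__INPUT__"', "${input}"
    )
    return {
        "service": "custom",
        "service_settings": {
            "secret_parameters": {"api_key": api_key},
            "url": url,
            "headers": {
                "Authorization": "Bearer ${api_key}",
                "Content-Type": "application/json;charset=utf-8",
            },
            "request": request_template,
            "response": {
                "json_parser": {"text_embeddings": "$.data[*].embedding[*]"}
            },
        },
    }


# Assumed variable name; falls back to a visible placeholder when unset.
api_key = os.environ.get("SILICONFLOW_API_KEY", "sk-<your real API key>")
endpoint_body = build_endpoint_body(
    "https://api.siliconflow.cn/v1/embeddings", "BAAI/bge-large-zh-v1.5", api_key
)
print("PUT _inference/text_embedding/test-text-embedding")
print(json.dumps(endpoint_body, indent=2))
```

Because the outer `json.dumps` escapes the inner quotes, the printed `request` value already has the `\"` form the console expects.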

Step 3: Verify the inference service

You can verify the endpoint with the following call. On success, it returns the embedding of the input text:

    POST _inference/text_embedding/test-text-embedding
    {
      "input": "hello,this is a inference service"
    }
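If you want to check the result programmatically instead of reading it by eye, a small validator like the one below may help. It is a sketch under an assumption: that the response has the shape `{"text_embedding": [{"embedding": [...]}]}`. Confirm this against the actual output of your cluster. `check_embedding_response` is a hypothetical helper, and the sample response is made up.

```python
def check_embedding_response(response: dict, expected_dims=None) -> int:
    """Hypothetical helper: validate the response and return the vector length."""
    items = response.get("text_embedding")
    if not items:
        raise ValueError("no 'text_embedding' entries in the response")

    # Every returned vector should be a non-empty list of the same length.
    dims = {len(item.get("embedding", [])) for item in items}
    if len(dims) != 1 or 0 in dims:
        raise ValueError(f"inconsistent or empty embeddings: {sorted(dims)}")

    dim = dims.pop()
    if expected_dims is not None and dim != expected_dims:
        raise ValueError(f"expected {expected_dims} dimensions, got {dim}")
    return dim


# Made-up response, shortened to three values for readability.
sample = {"text_embedding": [{"embedding": [0.012, -0.034, 0.056]}]}
print(check_embedding_response(sample))  # 3
```

Passing `expected_dims` is optional; it is useful when a downstream `dense_vector` mapping requires a fixed dimension.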